Software development is entering a new phase where AI-assisted development tools are embedded directly into everyday coding workflows. Tools such as GitHub Copilot, CodeWhisperer, and AI-powered IDEs can generate code snippets, documentation, and even entire functions within seconds. What once required hours of manual coding can now be completed through prompts and intelligent suggestions.

This acceleration is reshaping how teams build software. Yet the same systems that boost speed also introduce new operational risks: security vulnerabilities, incorrect logic, and the silent accumulation of technical debt. The challenge for modern engineering organizations is no longer whether to adopt AI coding tools, but how to govern them responsibly.

Why AI-Assisted Development Is Redefining the Modern AI Developer Workflow

The rapid rise of AI-assisted development is largely driven by its impact on developer productivity. AI coding tools function as intelligent collaborators embedded directly into integrated development environments (IDEs). Instead of searching documentation or writing repetitive code from scratch, developers can prompt AI systems to generate candidate solutions within seconds.

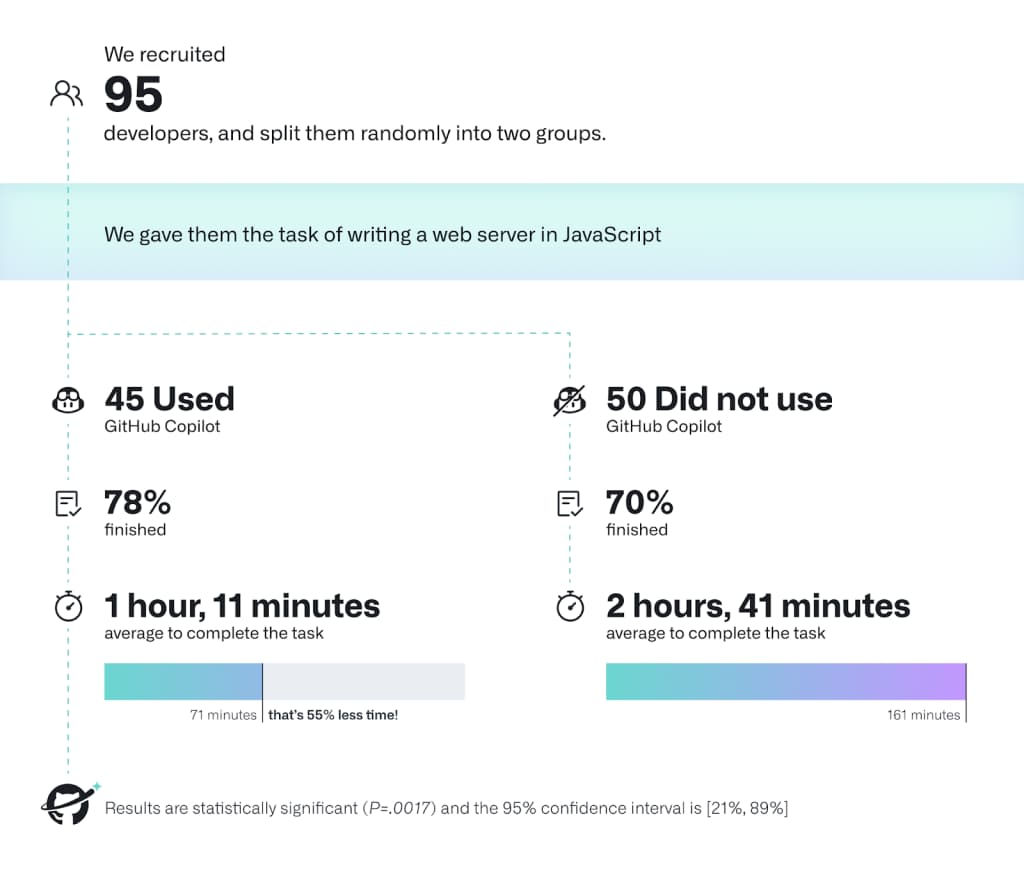

Early research has shown that the productivity gains are real. A controlled experiment conducted by researchers from Microsoft and GitHub found that developers using GitHub Copilot completed programming tasks 55.8% faster than developers working without AI assistance. These gains appear across several layers of the development workflow.

AI coding tools improve developer productivity and task completion speed (Source: GitHub Copilot Research Study)

One major advantage is the automation of repetitive engineering tasks. Many software projects contain large volumes of boilerplate logic such as API endpoints, configuration files, and data validation routines. AI coding tools can generate these structures in seconds, allowing engineers to concentrate on architecture and problem-solving rather than syntax.

Another major shift occurs in how developers explore solutions. Instead of implementing one approach at a time, engineers can prompt AI tools to generate multiple alternatives and evaluate them quickly. This dramatically shortens iteration cycles and encourages experimentation.

The impact on developer onboarding is also significant. Engineers joining a new project often need weeks to understand unfamiliar frameworks and internal coding patterns. AI coding assistants can generate contextual examples and explanations, helping new developers ramp up faster.

Industry adoption data suggests that these productivity improvements are already influencing how teams work. According to GitHub’s global developer survey, over 92% of developers are now using AI coding tools in some capacity, while 70% report that AI assistance improves their coding efficiency.

However, productivity improvements in the AI developer workflow do not automatically translate into better software systems. As AI tools begin generating larger portions of application code, new forms of engineering risk are emerging.

The Hidden Risks of AI-Assisted Development

While AI-assisted development accelerates coding speed, it also introduces new categories of engineering risk that traditional development processes were not designed to handle.

The most widely discussed issue is security. AI models generate code based on patterns learned from large public repositories, and those repositories contain both secure and insecure implementations. When an AI system suggests code, it may replicate vulnerable patterns without any signal that it is doing so. A large-scale study examining AI-generated code found that approximately 40% of AI-generated code samples contained security vulnerabilities, including improper input validation and insecure authentication logic. The risk becomes particularly serious when developers assume AI-generated code is correct simply because it compiles successfully.
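To make the "it compiles, so it must be fine" trap concrete, here is a minimal sketch of one common insecure pattern next to a safer equivalent. The table layout and the function names (`make_db`, `find_user_unsafe`, `find_user_safe`) are hypothetical and exist only for illustration; the example uses Python's standard `sqlite3` module and is not taken from any cited study.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with two example users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "viewer")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str):
    # Runs without errors, but the input is pasted directly into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str):
    # Parameterized query: the driver treats the input strictly as data.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()
```

Both functions behave identically for ordinary names. With an input such as `x' OR '1'='1`, the first version returns every row in the table while the second returns nothing, which is the kind of difference a reviewer or scanner has to catch because the interpreter will not.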

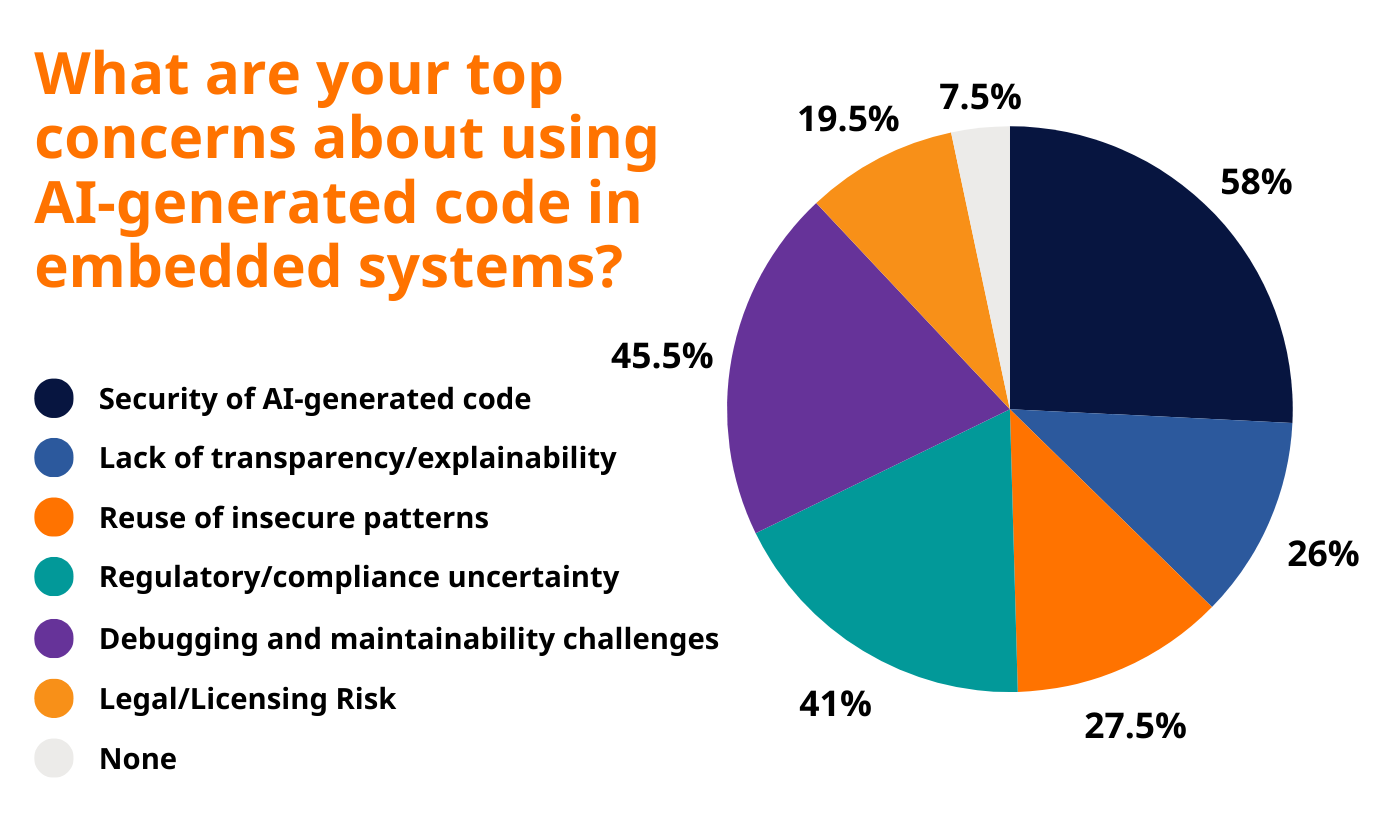

Risks developers report when using AI-generated code (Source: Embedded Systems Survey)

Logic errors represent another major challenge. AI systems are optimized to produce syntactically correct code that resembles common patterns. However, they often lack a deep understanding of the business context in which the code will operate. As a result, generated functions may fail to handle edge cases, concurrency scenarios, or complex data dependencies. These issues may not appear during initial testing but can surface later in production environments where real-world workloads reveal hidden flaws.
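As a small illustration of a plausible-looking function that misses an edge case, consider a page-count helper. Both function names are hypothetical, and the "naive" version is an invented example of the kind of pattern-matched code described above, not output from any specific tool.

```python
def page_count_naive(total_items: int, page_size: int) -> int:
    # Looks reasonable and passes a quick check with evenly divisible inputs,
    # but silently drops a partially filled last page.
    return total_items // page_size


def page_count(total_items: int, page_size: int) -> int:
    """Return the number of pages needed, counting a partial last page."""
    if page_size <= 0:
        raise ValueError("page_size must be positive")
    if total_items < 0:
        raise ValueError("total_items must not be negative")
    # Ceiling division using integer arithmetic only.
    return -(-total_items // page_size)
```

With 10 items and a page size of 5, both versions agree. With 11 items, the naive version still reports 2 pages, so the last item never appears. A test that only covers the even case would pass, which is why edge-case tests matter more when code is generated rather than reasoned through.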

Another growing concern is AI-generated technical debt. Software architecture relies on consistency, modularity, and long-term maintainability. AI coding tools, however, optimize primarily for immediate code completion rather than architectural coherence. As different developers rely on AI suggestions, codebases can gradually accumulate inconsistent design patterns, duplicated modules, and poorly integrated dependencies. Over time this creates structural complexity that slows down future development.

Research from the University of Cambridge examining the impact of AI coding tools on software quality suggests that while developers write code faster, they may spend more time reviewing and correcting AI-generated output, shifting effort from writing code to validating it. In many cases, teams only realize the long-term cost of this acceleration when maintainability begins to degrade.

The tension between faster code generation and sustainable architecture is becoming a recurring challenge in modern engineering workflows, particularly as AI coding tools encourage rapid iteration. This trade-off between development speed and long-term maintainability is explored further in this analysis of the core conflict behind vibe coding.

Building Safe AI-Assisted Development

For engineering leaders, the key question is not whether to adopt AI-assisted development, but how to deploy it safely within modern software delivery pipelines. Organizations that successfully integrate AI coding tools typically introduce additional governance layers into their development process. These controls help ensure that productivity gains do not come at the cost of security or maintainability.

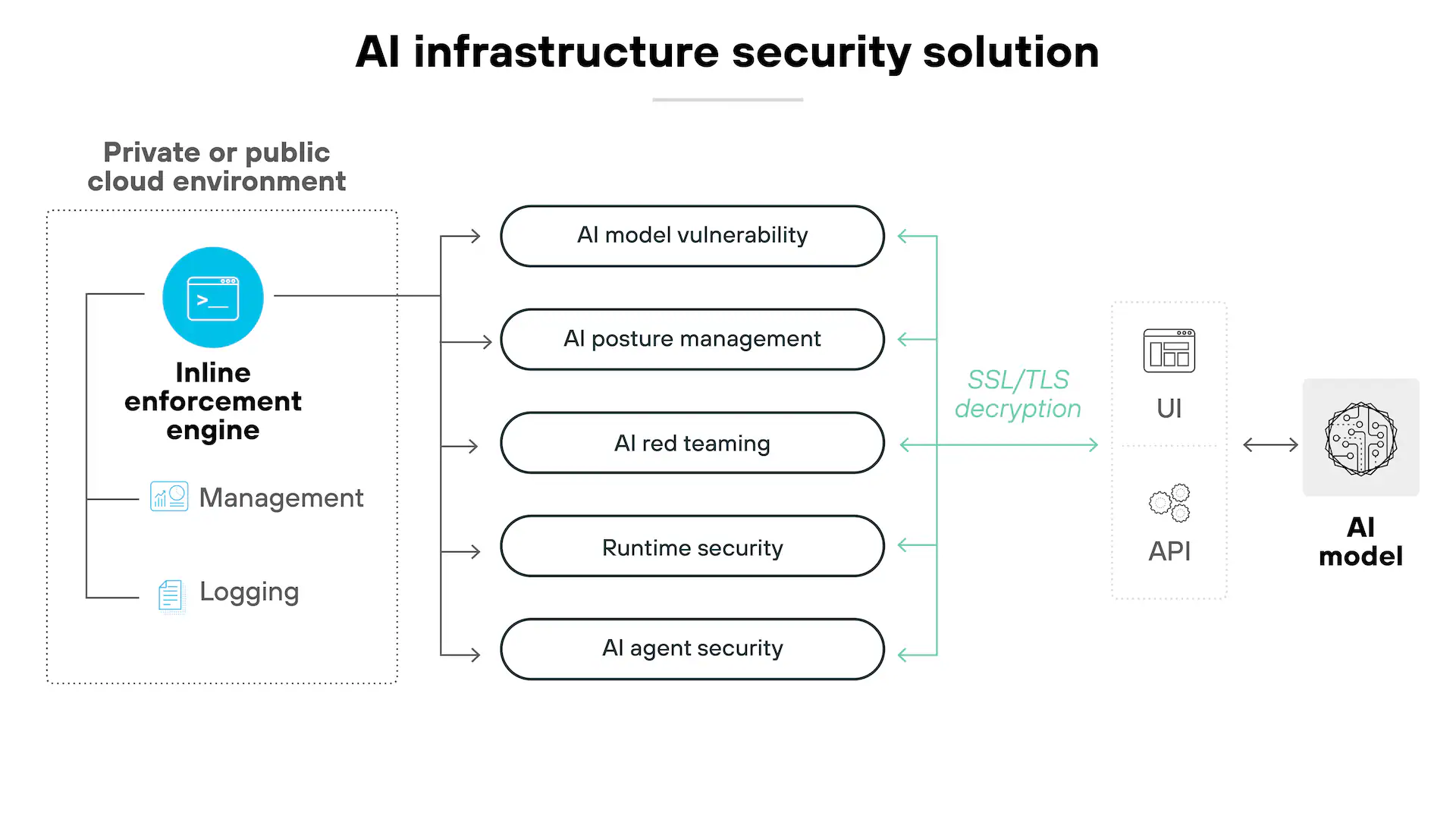

AI infrastructure security architecture for safe AI-assisted development (Source: AI security architecture overview)

One of the most effective safeguards is adapting code review practices for AI-generated code. Traditional peer reviews often focus on style consistency and functional correctness. In AI-assisted environments, reviewers must also look for patterns commonly introduced by AI tools, including insecure authentication flows, inefficient queries, and duplicated logic.

Automated testing also becomes significantly more important. AI-generated code should always pass through comprehensive validation pipelines including unit tests, integration tests, and static security analysis. Automated scanners can detect vulnerabilities such as injection risks or unsafe dependencies before the code reaches production. Static analysis tools have become increasingly important in this context. According to the IBM Cost of a Data Breach Report, vulnerabilities introduced during development remain one of the most common root causes of security incidents. Automated scanning integrated into CI/CD pipelines can significantly reduce this risk.
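The idea of a static check in a pipeline can be sketched in a few lines. The following is a deliberately tiny, hypothetical scanner built on Python's standard `ast` module; the rule set (`RISKY_CALLS`, the `shell=True` check) and the function name `find_risky_calls` are assumptions chosen for illustration, and a real pipeline would rely on an established static analysis tool rather than this sketch.

```python
import ast
from typing import List, Tuple

# Hypothetical, minimal rule set: built-ins that execute arbitrary code.
RISKY_CALLS = {"eval", "exec"}


def find_risky_calls(source: str) -> List[Tuple[int, str]]:
    """Return (line number, rule) pairs for risky calls found in the source."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        # Rule 1: direct calls to eval() or exec().
        if isinstance(node.func, ast.Name) and node.func.id in RISKY_CALLS:
            findings.append((node.lineno, node.func.id))
        # Rule 2: any call passing the literal keyword argument shell=True.
        for kw in node.keywords:
            if (
                kw.arg == "shell"
                and isinstance(kw.value, ast.Constant)
                and kw.value.value is True
            ):
                findings.append((node.lineno, "shell=True"))
    return sorted(findings)
```

A CI step could run a check like this over changed files and fail the build when the returned list is not empty. The source is only parsed, never executed, so the check is safe to run on untrusted code.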

Another emerging best practice involves establishing internal governance policies for AI coding tools. Some organizations restrict the use of AI for security-sensitive modules such as authentication systems or cryptographic implementations. Others require that all AI-generated code be clearly labeled in commits so that reviewers can inspect it more carefully.
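Both policies mentioned above, labeling AI-generated commits and keeping AI out of security-sensitive modules, can be combined into one automated check. The sketch below assumes a commit message trailer `AI-Assisted: yes` and the path prefixes `auth/` and `crypto/`; these names, along with the `Commit` type, are hypothetical conventions, not an existing standard.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical conventions an organization might adopt.
AI_TRAILER = "AI-Assisted: yes"
RESTRICTED_PREFIXES = ("auth/", "crypto/")


@dataclass
class Commit:
    message: str
    changed_paths: List[str]


def is_ai_labeled(commit: Commit) -> bool:
    """True if any line of the commit message is the AI trailer (case-insensitive)."""
    return any(
        line.strip().lower() == AI_TRAILER.lower()
        for line in commit.message.splitlines()
    )


def policy_violations(commit: Commit) -> List[str]:
    """Return restricted paths touched by a commit labeled as AI-assisted."""
    if not is_ai_labeled(commit):
        return []
    return [p for p in commit.changed_paths if p.startswith(RESTRICTED_PREFIXES)]
```

A check like this only works if the label is applied honestly, so it complements review rather than replacing it. Its main value is routing labeled changes in sensitive areas to a stricter review path.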

Forward-thinking engineering teams are also beginning to track metrics related to AI-generated code. These include the percentage of generated code that requires modification, the number of defects introduced by AI suggestions, and the long-term maintainability of AI-generated components. Monitoring these metrics helps organizations determine whether AI-driven productivity gains are real or are merely shifting engineering costs into future maintenance work.
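Two of these metrics are simple ratios once the underlying data is collected. The sketch below assumes a team already records, per accepted suggestion, how many lines were accepted, how many were later modified, and how many defects were traced back to it; the `SuggestionRecord` fields and both function names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SuggestionRecord:
    accepted_lines: int  # lines of AI-generated code merged
    modified_lines: int  # of those, lines later rewritten by a human
    defects: int         # defects traced back to this suggestion


def modification_rate(records: List[SuggestionRecord]) -> float:
    """Fraction of accepted AI-generated lines that needed modification."""
    total = sum(r.accepted_lines for r in records)
    if total == 0:
        return 0.0
    return sum(r.modified_lines for r in records) / total


def defects_per_kloc(records: List[SuggestionRecord]) -> float:
    """Defects per 1,000 accepted AI-generated lines."""
    total = sum(r.accepted_lines for r in records)
    if total == 0:
        return 0.0
    return 1000 * sum(r.defects for r in records) / total
```

For example, two records of 100 and 300 accepted lines with 20 and 60 modified lines give a modification rate of 80 / 400 = 0.2, and two defects across those 400 lines give 5 defects per thousand lines. The hard part in practice is attribution, that is, deciding which lines and defects count as AI-generated, which this sketch takes as given.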

Conclusion

The rise of AI-assisted development is reshaping software engineering workflows. AI coding tools can dramatically accelerate coding tasks, allowing development teams to prototype, iterate, and ship software faster than ever before. Yet the same technology that increases development speed can also introduce new categories of risk. Security vulnerabilities, hidden logic errors, and accumulating technical debt are becoming common side effects of unstructured AI adoption in software teams.

For CTOs and engineering leaders, the goal should not be to slow down AI adoption but to guide it through structured development practices. Strong code governance, automated testing pipelines, and disciplined code review processes ensure that AI tools enhance developer productivity without weakening system reliability.

Organizations that build these safeguards early will gain a sustainable advantage: the ability to scale engineering output while maintaining secure and resilient software systems.

If your organization is exploring AI-assisted development but wants to maintain strict quality and security standards, Twendee helps enterprises integrate AI coding tools into controlled development pipelines with governance frameworks, automated testing, and engineering oversight. Learn more about how Twendee supports scalable software delivery on Facebook, X, and LinkedIn.