AI pilots are easy to celebrate. A demo works, a proof of concept gets internal attention, and leadership starts to imagine a much bigger rollout. The problem appears in the next phase. Gartner predicted that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025. McKinsey found that although 92% of companies plan to increase AI investment, only 1% consider themselves mature, meaning AI is fully integrated into workflows and producing substantial business outcomes. That gap is the real story behind many AI implementation challenges.

What stalls these projects is usually less dramatic than model failure. In most enterprises, the bigger issue is that AI has to live inside real systems, real permissions, real data pipelines, and real operating routines. Gartner says 60% of AI projects unsupported by AI-ready data will be abandoned through 2026, while IBM’s 2025 CEO study found that only 25% of AI initiatives delivered expected ROI and only 16% scaled enterprise-wide. The lesson is clear: the hard part of enterprise AI is rarely the model alone. It is the messy work of integration, governance, and execution.

Why AI Implementation Challenges Start After POC

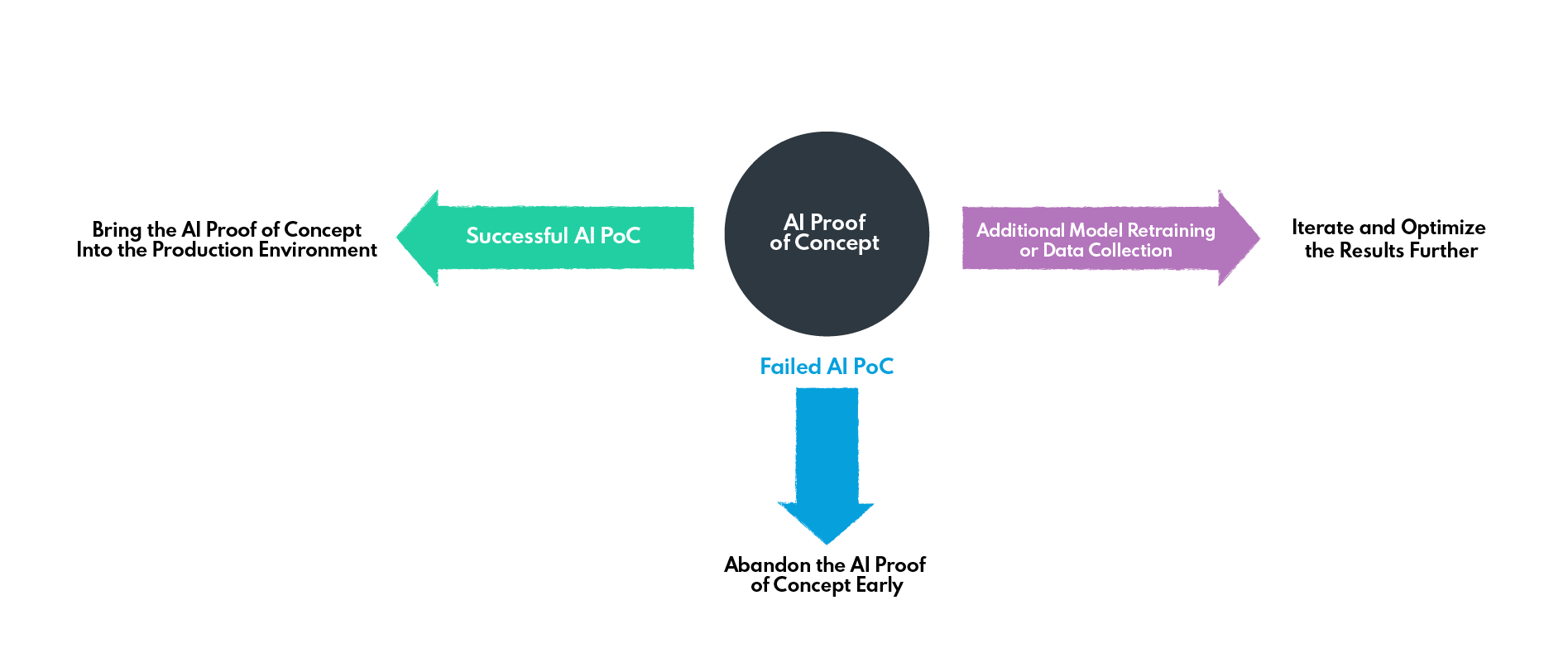

A proof of concept is usually built in a protected environment. The data is cleaner, the scope is narrower, the number of stakeholders is smaller, and exceptions are easier to ignore. Production is different. Once AI enters a real enterprise setting, it has to work with fragmented data, legacy platforms, security controls, approval logic, latency constraints, and business accountability. That is why many teams overestimate readiness when a pilot performs well in a sandbox. Gartner’s 2025 guidance makes this especially clear: through 2026, organizations are expected to abandon 60% of AI projects that are not supported by AI-ready data (Gartner).

The same pattern appears in executive-level data. IBM reported in its 2025 CEO study that only 25% of AI initiatives had delivered expected ROI, and only 16% had scaled enterprise-wide (IBM). Those numbers suggest that the barrier is not enthusiasm or experimentation. It is the difficulty of turning isolated pilots into dependable AI production systems.

AI proof of concept path to production or failure (source: nexocode)

The IBM study also points to a structural cause: 72% of CEOs see proprietary data as key to unlocking AI value, yet 50% say the pace of recent investments has left their organizations with disconnected, piecemeal technology. When AI is added on top of that kind of environment, the result is often another isolated layer rather than a durable capability. In practice, many AI deployment challenges are symptoms of architectural fragmentation that already existed before the AI project started.

Many organizations run into AI implementation challenges not because the model underperforms, but because the business is still unprepared at the system, data, and workflow level. That broader readiness gap matters just as much as technical capability, as explored in why most companies are still not ready for AI adoption.

Why AI Implementation Challenges Are Really Integration Problems

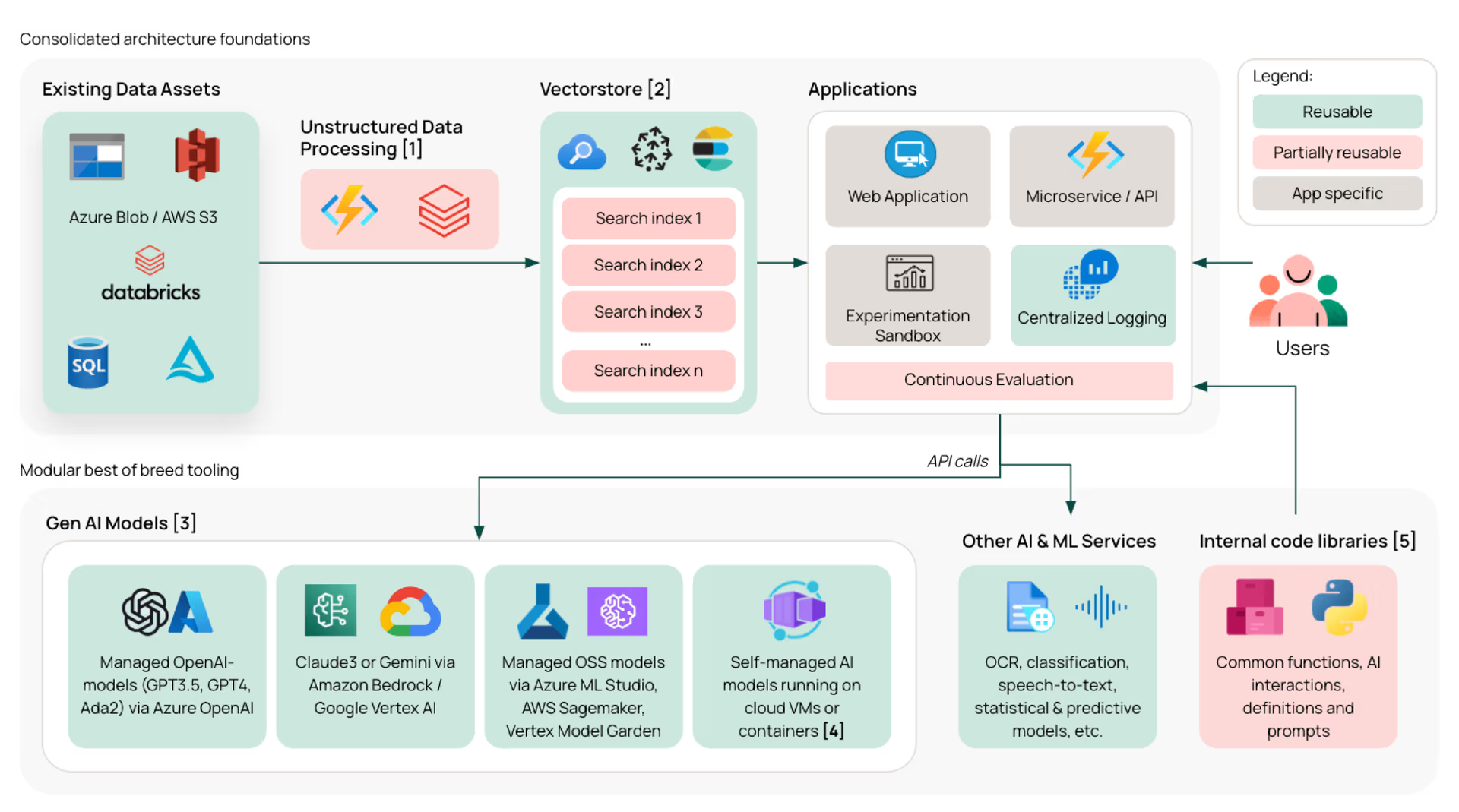

The most common misunderstanding in enterprise AI is treating implementation as a model problem. In reality, it is usually a system design problem. A model only becomes useful when it can read the right context, connect to the right systems, respect the right permissions, and produce outputs that fit a real workflow. McKinsey’s 2025 State of AI found that organizations capturing more value are rewiring business processes, embedding AI into workflows, and tracking KPIs for AI solutions rather than treating AI as a separate innovation layer (McKinsey).

Enterprise AI integration architecture for production systems (source: futurice)

That is why many AI deployment challenges are not really about deployment in the narrow technical sense. They are about what happens before and after the model runs. If AI cannot connect to enterprise data, cannot trigger actions inside internal systems, or cannot operate within a defined review path, it remains an interesting tool rather than a production capability. IBM’s 2026 guidance on AI integration states that enterprises frequently run into compatibility issues, technical problems, and disruption to business operations when trying to integrate AI into existing environments (IBM).

A more accurate way to frame enterprise AI implementation is this: the model is only one layer, but the business experiences AI through systems and operations. The organizations that move beyond pilot stage are usually the ones that redesign the workflow around the AI use case instead of placing the model beside the workflow and hoping adoption follows. McKinsey and AWS both point in that direction, even from different angles: one emphasizes workflow embedding and KPI alignment, the other emphasizes integration into operations and infrastructure (McKinsey; AWS).

How to Solve AI Implementation Challenges in Production

A stronger implementation path starts with the workflow, not the model. The better question is not “Which model should be used?” but “Which operational bottleneck is worth redesigning, and what systems must AI connect to in order to improve that workflow?” That framing changes the project from an experimentation exercise into a real production initiative. Gartner’s data-readiness warning, IBM’s ROI findings, and McKinsey’s process-rewiring research all support the same conclusion: value appears when AI is built into operating environments, not tested outside them (Gartner; IBM; McKinsey).

In practice, that usually means five things matter more than most teams expect:

- defining the business KPI before selecting the model
- auditing the systems, data sources, and permissions AI will depend on
- designing fallback logic, human review, and logging from the start
- integrating outputs into real internal platforms or workflows
- monitoring usage quality and business impact after launch

This is why the core of AI integration challenges is operational, not theoretical. The technical model may work, but enterprise value only appears when the surrounding system is ready to support it.
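To make the third item above (fallback logic, human review, and logging) concrete, here is a minimal Python sketch of a wrapper around a model call. It is an illustration under stated assumptions, not a reference implementation: the names `run_with_guardrails`, `Decision`, and `REVIEW_THRESHOLD` are hypothetical, and the model is assumed to return an output together with a confidence score, which not every model provides.

```python
import logging
from dataclasses import dataclass
from typing import Callable, Tuple

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("ai_workflow")

# Hypothetical cutoff: outputs below this confidence go to a person for review.
REVIEW_THRESHOLD = 0.8


@dataclass
class Decision:
    output: str
    route: str  # "auto", "human_review", or "fallback"


def run_with_guardrails(
    model: Callable[[str], Tuple[str, float]],
    fallback: Callable[[str], str],
    request: str,
) -> Decision:
    """Call the model, then route the result based on errors and confidence."""
    try:
        output, confidence = model(request)
    except Exception:
        # Model or integration failure: fall back to the existing non-AI path.
        log.exception("model call failed; using fallback for request=%r", request)
        return Decision(fallback(request), "fallback")

    route = "auto" if confidence >= REVIEW_THRESHOLD else "human_review"
    # Every decision is logged so routing can be audited after launch.
    log.info("request=%r confidence=%.2f route=%s", request, confidence, route)
    return Decision(output, route)


if __name__ == "__main__":
    # Stand-in model and fallback, used only to exercise the routing logic.
    def stub_model(text: str) -> Tuple[str, float]:
        return f"draft reply to: {text}", 0.65

    def manual_queue(text: str) -> str:
        return f"queued for manual handling: {text}"

    print(run_with_guardrails(stub_model, manual_queue, "refund request"))
```

The design choice worth noting is that the fallback and review paths are part of the function signature and return value, so downstream systems always know how an output was produced instead of having to assume the model handled it.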

The operational payoff can be real when that integration is done well. In the World Economic Forum’s 2025 report, one hospital-services deployment used machine learning for discharge planning across 12 hospitals and increased daily discharge rates by 4.6% in six months. The gain came from embedding AI into a real operational process, not from running the model as a disconnected experiment (World Economic Forum).

In many cases, this is where implementation partners make the biggest difference. Enterprises rarely need another isolated AI demo. They need support in moving AI from proof of concept into production, integrating it into existing software environments, and aligning it with real operating workflows. Twendee supports that transition by helping businesses carry AI from POC to production, while also embedding AI into enterprise software systems and day-to-day operational processes where it can create measurable value.

Conclusion

Many AI projects fail before production not because the model is weak, but because the organization mistakes a successful pilot for a production-ready system. The real challenge is integration: connecting AI to data, workflows, internal systems, governance rules, and operational accountability. That is why AI implementation challenges are fundamentally about systems and operations, with the model as only one part of the stack.

For businesses trying to move from POC to reliable AI production systems, Twendee supports the journey end to end: helping deploy AI from proof of concept to production, integrating AI into enterprise software environments, and embedding it into operational workflows where it can deliver real business impact. Connect with us at Facebook, X, and LinkedIn.